AI Regulation & Recruitment

The laws are live. The obligations are real.

Here's everything staffing and recruitment firms need to know (and actually do) to use AI confidently in a world where the rules are being written in real time.


Let's start here...

There's a reasonable chance AI is screening your candidates right now

Maybe it's ranking CVs. Maybe it's summarising your interview notes. Maybe it's scoring a candidate's engagement with your application portal. If you're using Bullhorn with any AI-powered tooling (and most firms reading this are), AI is almost certainly touching your hiring process.

Here's the thing: most of your candidates don't know that. Hardly any candidate communications mention it. And in a growing number of jurisdictions, that's no longer a minor oversight; it's legal exposure.

We've pulled this guide together to help you, not to scare you. We're not a law firm, and we're not going to pretend the regulatory picture is clearer or simpler than it is. What we can do is help you cut through the noise: what's actually live now, what's coming, and which practical steps will make your business more compliant and more trusted, without needing to hire a dedicated AI ethics team.

Let's start with the question that trips people up most.

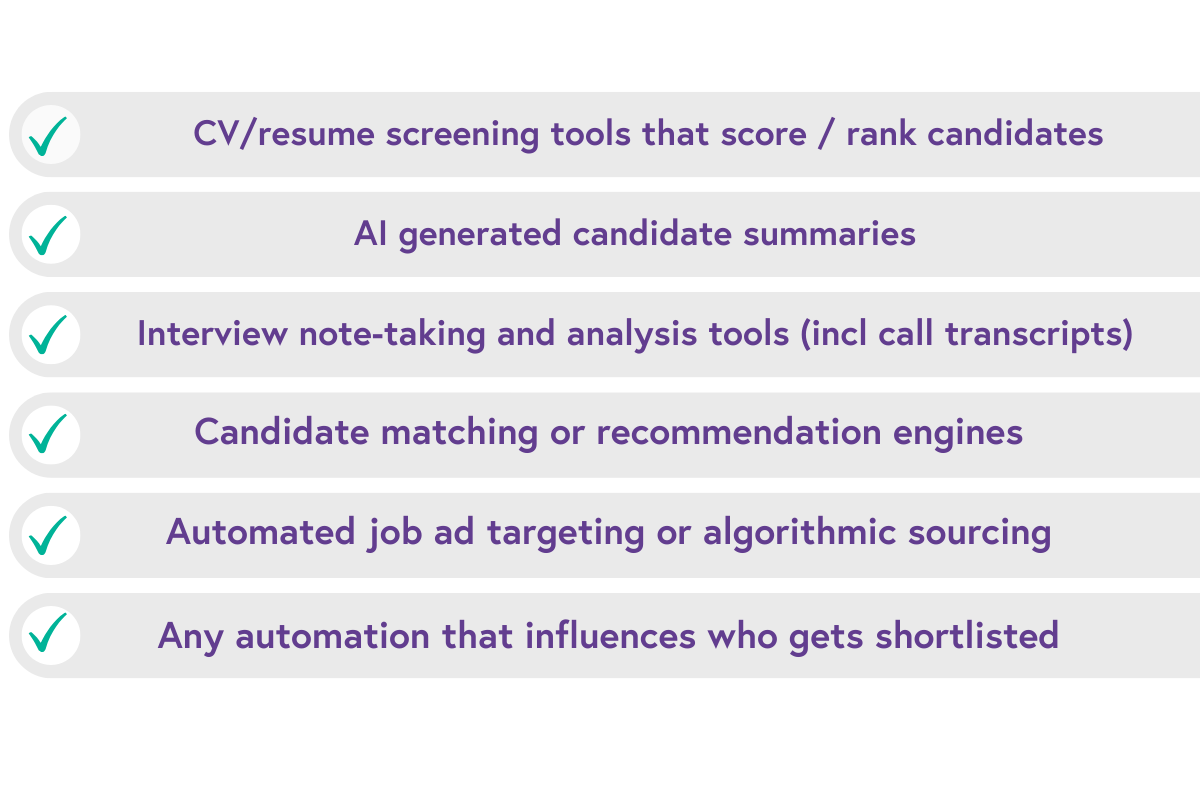

What actually counts as AI in hiring?

More than you probably think. The broad definition used across most AI hiring legislation is:

"Any machine-based system that generates outputs, predictions, recommendations, decisions, or content that influence employment decisions."

In practice, for a typical Bullhorn customer, that can mean a lot of things. ➡️

Notice something about that list?

It isn't limited to the AI tools you've explicitly signed up for and use every day. The regulations don't care whether you think of a tool as "just a notetaker" or "only making suggestions." If a tool can influence a hiring decision, or already does, it's in scope.

And yes, that almost certainly includes features you haven't thought about. The AI-generated job description assistant in your ATS. The interview scheduling tool that ranks candidate availability. The email ranking that puts some candidates at the top of your inbox. All of it potentially counts.

Where we are now...

The regulatory landscape: a map, not a maze

The good news is that the underlying principles across most AI hiring regulation are consistent. Transparency, human oversight, non-discrimination and accountability keep appearing in law after law, jurisdiction after jurisdiction. The bad news is that the specific requirements, timelines and enforcement mechanisms vary enough to make a simple "one and done" compliance approach impossible.

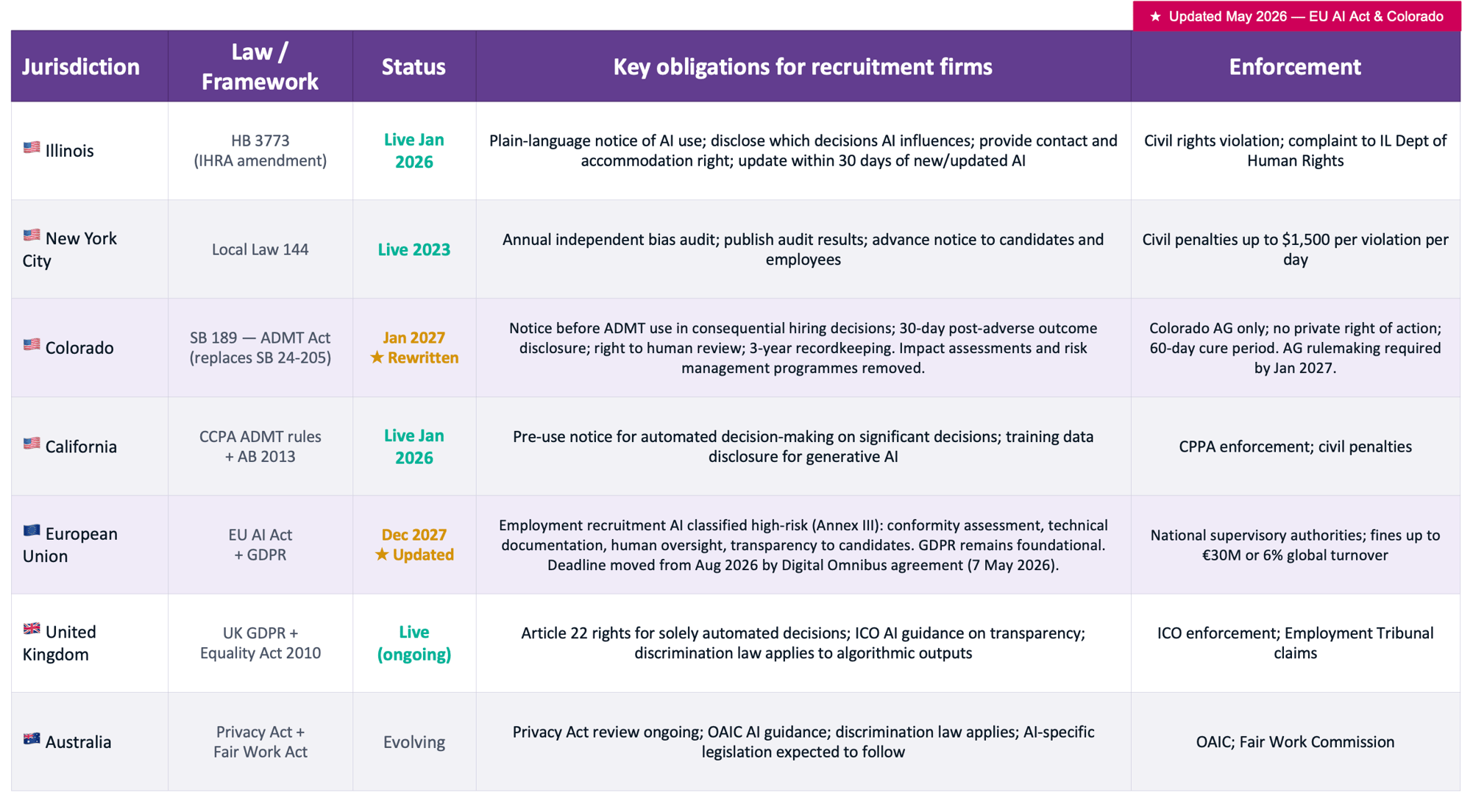

Here's the honest state of play as of May 2026:

Two things worth noting here...

First, the US doesn't have a federal AI law; it has a growing patchwork of state laws, each with different triggers and requirements. In late 2025, President Trump signed an executive order directing the DoJ to challenge state AI laws deemed inconsistent with a "minimally burdensome" national framework. That's live political pressure, but it's not a compliance lifeline. Illinois is live right now. Colorado's law, originally due to take effect in June 2026, has been substantially amended and pushed back to January 2027 (updated May 2026). The smart move is to make sure you are compliant now, rather than rely on a federal intervention that may never materialise.

Second, on 7 May 2026, the EU Parliament and Council agreed to push back the EU AI Act's high-risk obligations - including for employment and recruitment AI - from August 2026 to December 2027. The agreement still needs formal ratification, but that's the new planning date. That's a useful extension. It is not a green light to stop preparing. The delay happened because the technical standards needed for compliance weren't ready in time, not because the obligations are going away. The AI Act is still in force. GDPR still applies to every piece of candidate data your AI touches, right now. The firms that use this extra time to get their governance in order will be in a much stronger position than those who treat it as permission to do nothing.

The practical bit...

Five things every staffing firm should do right now

We’re already past the exploration and consideration stage: the risks are real, and it’s critical to act now. These five actions will move you from exposed to confident, regardless of your size, location or how many AI tools you're currently using.

1. Audit your AI touchpoints

Map every place AI influences a hiring or employment decision (CV screening, candidate ranking, interview summarisation, chatbots, automated comms, scheduling tools). All of it is in scope under most regulations.

The firms that get into trouble aren't necessarily the ones using the most AI; they're the ones who haven't stopped to look at what they're actually running.

If you're a Bullhorn customer, start with your integrations list. If a tool says it uses AI anywhere in its marketing, it counts.

2. Ask your vendors the hard questions

Your vendor sold you the tool. Your vendor's compliance documentation covers their obligations. But the legal liability, in whichever jurisdiction you operate, falls on you as the employer or their agent.

Ask each AI vendor: which employment decisions does your tool influence? What personal data does it process? Have you conducted bias testing, and can you provide documentation? What's your process when you substantially update the model? If they can't answer clearly, that's your answer, and your risk.

3. Write your Glass Box statement

Don't let legal draft this alone. Write it in plain English, as if you're explaining it to a candidate face to face. "We use AI to summarise CVs and flag skills matches against job requirements. The AI does not make the final decision — a recruiter does." That's a Glass Box statement.

"We may use AI tools in our process" is not. That's frosted glass, and regulators are specifically looking for the difference. We cover this in more detail in The Glass Box Principle.

4. Update your candidate and employee notifications

Illinois requires notice of which employment decisions AI influences, the purpose of the system, what data it collects, a point of contact for questions, and the right to request a reasonable accommodation.

It must be in plain language, accessible in languages commonly spoken in your workforce, and updated within 30 days of adopting a new or substantially changed AI tool.

Even if you're not operating in Illinois yet, this is the gold standard to aim for, and similar requirements are likely to reach most jurisdictions anyway. Use our template notice as a starting point.

5. Assign someone to own AI governance

Compliance doesn't happen in documents; it happens when your team clearly understands what's required and takes responsibility for it.

Someone in your firm needs to own AI governance: monitoring regulatory changes, managing your AI tool inventory, updating your notices, and being the point of contact if a candidate asks a question.

This doesn't need to be a full-time role, but it does need to be somebody's actual job, not an afterthought.

There's a compliance issue that almost nobody talks about, but it sits directly underneath everything else: your AI is only as compliant as the data it's working with. If your Bullhorn database has duplicate candidate records, outdated consent flags, missing fields or legacy data from before your current privacy policies, your AI is processing that data too. Cleaning your data isn't just good housekeeping; it's fundamental to defensible AI use. This is exactly why Kyloe DataTools exists. More on that in the Kyloe section below.

Digging deeper...

The regional realities: where your candidates are matters

The table above gives you the overview. Here's the substance behind each major jurisdiction. We update these sections as regulations evolve.

USA

The US doesn't have one AI law. It has many, with more coming. The current approach from the federal government is innovation first, which in practice means states and cities are the ones writing enforceable rules. For recruitment firms operating nationally or with candidates in multiple states, you need to build your compliance programme to the most demanding requirements in your footprint.

Illinois HB 3773, in force since 1 January 2026, is the current benchmark. It's worth reading carefully because it has some features that distinguish it from other state laws: it frames AI misuse as a civil rights violation, not just a technical compliance failure. It applies to any employer, or their agents, who use AI affecting candidates or employees in Illinois, regardless of where the company is headquartered. And it requires genuinely plain-language disclosure, not a buried privacy policy update.

New York City Local Law 144 is more demanding in some ways: an automated employment decision tool must have had an independent bias audit within the year before you use it (so, in practice, annually), and you have to publish a summary of the results.

Colorado's AI law has been comprehensively rewritten. Governor Polis signed SB 189 on 14 May 2026, repealing and replacing the original Colorado AI Act (SB 24-205) entirely. The new law takes effect 1 January 2027.

This is a significant shift in both scope and philosophy. The original law was one of the most demanding in the US: a risk-based governance model closer in spirit to the EU AI Act, requiring mandatory annual impact assessments, risk management programmes, and a duty of reasonable care to prevent algorithmic discrimination. All of that has gone.

What replaces it is a transparency-and-notice framework focused on Automated Decision-Making Technology (ADMT), defined broadly as any tool that processes personal data and uses computation to produce outputs that materially influence consequential decisions, including hiring, promotion, pay and termination.

United Kingdom

The UK's approach post-Brexit has been deliberately lighter-touch than the EU's; "pro-innovation" was the stated position. That posture shifted noticeably in late 2025, and a UK AI framework is expected during 2026, with the AI Security Institute (formerly the AI Safety Institute) moving toward a formal legal mandate. For now, the operative framework is UK GDPR (specifically Article 22 rights around solely automated decisions), the Equality Act 2010 (which applies fully to algorithmic discrimination), and ICO guidance on AI transparency.

Article 22 is more significant than many firms realise. If your AI is making solely automated decisions with significant effects on candidates (and there's a reasonable argument that AI-driven shortlisting qualifies), candidates have the right to obtain human review. Your process needs to accommodate that, and your notices need to tell people it exists.

European Union

The EU AI Act explicitly classifies AI used in recruitment and employment management as high-risk. That's not a subjective assessment; it's written into the regulation. High-risk classification triggers a set of obligations that go significantly further than most US state laws: conformity assessments, technical documentation, mandatory human oversight, logging and audit trails, transparency to affected individuals, and registration in an EU database of high-risk AI systems. Many of these duties sit with the provider (your vendor), but deployers (you) carry their own, including human oversight, keeping the system's logs and informing the people affected.

Update — May 2026: On 7 May 2026, the EU Parliament and Council agreed to push back the high-risk obligations for employment and recruitment AI from August 2026 to December 2027. The agreement still needs formal ratification, but that's the new planning date. That's a useful extension. It is not a green light to stop preparing.

The delay happened because the technical standards needed for compliance weren't ready in time, not because the obligations are going away. GDPR remains the foundation layer for everything involving personal data, which means all your existing obligations around consent, purpose limitation, data minimisation and rights of access continue to apply right now, regardless of the revised AI Act timeline.

For staffing firms operating across multiple EU member states: individual countries are now implementing national AI laws that layer on top of the EU Act. Italy's law (in force October 2025) has additional provisions. More are coming. Keep a watching brief on your key operating markets.

Asia Pacific (APAC)

The APAC picture is more varied but moving faster than most firms expect.

Australia's Privacy Act review continues; the OAIC has published AI guidance; and Fair Work Act discrimination provisions apply to algorithmic decision-making whether or not there's specific AI legislation.

Singapore's Model AI Governance Framework is voluntary, but it is widely followed by enterprise buyers and is shaping de facto compliance expectations.

South Korea has passed an AI Basic Act with enforcement from January 2026.

Japan's AI Promotion Act (enacted May 2025) takes a lighter, innovation-first approach, but reputational pressure and enterprise buyer expectations create real compliance incentives even without punitive enforcement.

If you're operating in APAC, the safe approach is to apply your global compliance framework (Glass Box statement, vendor diligence, human oversight) and stay close to local developments. The APAC market is moving from voluntary to mandatory faster than most people anticipated twelve months ago.

The hard conversation...

Vendor accountability: don't outsource your risk

This is the part of the conversation that most AI vendors would rather you didn't have. So let's make sure you do.

In Illinois, Colorado and New York, the compliance obligation falls on you (the employer or their agent), not primarily on your AI vendor. In the EU, the AI Act places much of the burden on providers, but as a deployer and data controller you still carry obligations of your own. Your vendor built the tool. You deployed it. Any infringement of candidates' rights is your risk. When a complaint lands, the first question from a regulator or a lawyer won't be "which vendor did you use?" It'll be "what did you tell this candidate about how AI was used in their application?"

That creates a very specific obligation: you need to know what your vendors' tools actually do. Not what the marketing brochure says. Not what the onboarding slide deck implied. What the tool actually does: which decisions it influences, what data it processes, what protected characteristics might be in scope, and what the vendor can prove about how the model behaves.

Here are the questions worth asking every AI vendor before you deploy or renew:

- Which specific employment decisions does your tool influence: screening, ranking, scoring, matching, something else?
- What personal data does it process, and does that include any data that could be a proxy for a protected characteristic?
- Have you conducted bias testing? Can you provide the methodology and results?
- What happens when you substantially update the model? Will you notify us, and how much notice will you give?
- Do we inherit compliance obligations as a user? What documentation can you provide to support our compliance?
- Does your tool comply with Illinois HB 3773, NYC Local Law 144, and the EU AI Act high-risk requirements?
- What audit trail does your tool generate, and can we access it if a complaint is raised?
- Are you registered in the EU high-risk AI database (if you're marketing to EU customers)?

If a vendor can't answer these questions, or gives you vague reassurances that don't point to specific documentation, that's valuable information. It doesn't necessarily mean the tool is non-compliant. It does mean the compliance risk sits entirely with you.

A principle that changes everything...

The Glass Box: transparency as competitive advantage

Here's a reframe worth thinking about.

Most firms approach AI compliance as a legal risk to minimise. Draft the notice, tick the box, move on. The minimum viable disclosure, the vaguest language that still technically counts. That approach produces what we call frosted glass: technically there, completely opaque. "We may use AI tools in our process" is frosted glass. It says nothing, discloses nothing, and builds no trust.

A Glass Box is the opposite. It's your commitment that if a candidate or employee asks "what is that AI doing with my information and why?", you can actually show them. Not because the law requires it (though increasingly it does) but because it's the right way to treat people, and because candidates and clients are increasingly asking the question.

A good Glass Box statement answers five things in plain language:

What the AI does

In plain terms:

Summarises your CV

Ranks applications against job requirements

Transcribes and analyses our interview calls.

What data it uses

Categories only; you don't need to name every field.

"Your CV, covering letter and interview audio" is fine.

"All available data" is not.

Who makes final decisions

If it's a human, say so. If it's AI, be honest about that.

Most firms use AI to assist human decision-makers; that's exactly what the notice should say.

Decisions it touches

Does it affect who gets shortlisted? Who gets offered an interview? Who is presented to a client?

Be specific. Vague descriptions are a red flag to regulators.

The fifth element... Who can I talk to?

Most firms miss this final one: a contact point for questions, and an acknowledgement that candidates can request an alternative process. Not all jurisdictions require this yet, but most are moving toward it, and building it in now costs almost nothing. Having to retrofit it later, under complaint, costs considerably more.

The firms that get this right turn compliance into a trust asset. They can tell clients: "here's our AI governance policy, here's our Glass Box statement, here's our bias audit." That's a differentiator in a market where AI scepticism is growing. The firms that get it wrong are the ones whose Glass Box statement is a paragraph of legal hedging that means nothing to anyone who reads it.

We've written a full practical guide to writing your Glass Box statement, including a copy-paste template:

What Kyloe brings to this...

AI compliance isn't just a legal problem. It's a data problem.

The obligations in Illinois, the EU and beyond all assume something: that you know what data your AI is processing. Most Bullhorn databases contain years of legacy data: duplicates, outdated records, missing consent flags, incomplete fields. That data is feeding your AI whether you like it or not. Kyloe's suite of Bullhorn-native tools is directly relevant to building compliance infrastructure that actually holds up.

Your AI is only as compliant as your data

Deduplicate records, identify candidates with missing or outdated consent, clean legacy data before it creates AI compliance risk.

An audit-ready Bullhorn database isn't just good practice, it's the foundation of defensible AI use.

Automate your compliance documentation

Candidate notices, AI disclosure statements, consent documents — all generated and delivered automatically through your Bullhorn workflow.

AwesomeDocs is how you do it at scale without the manual overhead.

Build your governance framework properly

Not sure where to start? We can audit your current AI footprint, identify your compliance gaps, help you write your Glass Box statement, and build an ongoing governance process into your Bullhorn workflows.

Practical, Bullhorn-specific help.

Not sure where you stand with AI?

Let's find out together.

Straight answers...

FAQs

Does Illinois HB 3773 only apply to firms based in Illinois?

No. It applies to any employer using AI in decisions that affect employees or candidates in Illinois — regardless of where your headquarters are. If you're a London-based staffing firm placing candidates in Chicago, you're in scope. Geography of the candidate is what matters, not geography of the employer.

What if our AI vendor handles the compliance?

Your vendor cannot handle compliance on your behalf. Your vendor is responsible for their tool's performance, documentation and their own compliance obligations. But the notice requirements, the obligation to disclose AI use to candidates, and the liability if something goes wrong? That sits with you as the employer or their agent. Your vendor's compliance documents are an input to yours, not a substitute for them. Always get your vendor's documentation in writing and don't assume their "compliance-ready" claim covers your specific obligations.

What are the actual consequences of non-compliance?

It varies by jurisdiction. In Illinois, AI misuse is framed as a civil rights violation under the Illinois Human Rights Act (complaints go to the Illinois Department of Human Rights). There's no specific fine in the text of HB 3773, but civil rights violations carry significant legal and reputational exposure. In New York City, fines run up to $500 for a first violation and $500 to $1,500 for each subsequent one, and each day a non-compliant tool is used counts as a separate violation. Under the EU AI Act, breaching the high-risk obligations can attract fines of up to €15 million or 3% of global annual turnover, whichever is higher; the higher ceiling of €35 million or 7% is reserved for prohibited AI practices. Enforcement actions against AI deployers increased significantly in 2025, and a 42-state attorney general coalition in the US has signalled coordinated enforcement pressure. The direction of travel is toward more enforcement, not less.

Will a US federal law override state laws?

It's possible, eventually. The December 2025 Executive Order created an AI Litigation Task Force to challenge state AI laws inconsistent with a national framework. A bill has been introduced to block the challenge. The outcome is genuinely uncertain. What isn't uncertain: state laws are enforceable right now, until they're formally struck down, which will take time even if a challenge succeeds. Build your compliance programme for what's live today, not for a federal intervention that may or may not happen.

Is AI making recruitment better? Isn't this all a bit anti-technology?

Often, yes, it does make recruitment better. And no, this isn't anti-technology.

AI in recruitment can genuinely reduce time-to-hire, improve consistency and, done right, reduce some forms of human bias. The question isn't whether to use AI in recruitment; it's how you use it, and whether you do so with your eyes open. The reason regulations exist is that AI can also encode historic bias at scale, in ways that are harder to spot than individual human bias and much harder to challenge. Transparency, oversight and accountability don't slow down good AI use; they make it defensible. That's better for your candidates and better for your business.

What's the single most important thing I should do?

List every AI tool touching your hiring or employment process. For each one, ask your vendor: what decisions does your tool influence, and what data does it use? If they can't answer clearly, that's your most pressing risk. If they can, use that information to start building your Glass Box statement and updating your candidate notices. One afternoon of mapping will tell you more about your compliance position than six months of reading about it.

This guide is for informational purposes only and does not constitute legal advice. Always consult a qualified legal professional for advice specific to your circumstances. Last updated: 20 May 2026.